Data workflows and pipelines are an integral part of bioinformatics. However, the tools used to write and deploy workflows in bioinformatics differ from the tools used for similar tasks in data engineering. In this post, I’ll lay out what I see as the reasons for this separation, and speculate on where bioinformatics is headed in the future.

What is a bioinformatics workflow?

A bioinformatics workflow is a series of programmatic steps to transform raw data into processed results, figures, and insights. A workflow can consist of many steps, each involving different tools, parameters, reference databases, and requirements. For example, a bioinformatics workflow I developed at Loyal transforms raw sequencing data from each sample into a DNA methylation profile. This workflow has about 10 steps, uses several different open source tools, and requires the canine reference genome in addition to the raw data input.

The complexity of these workflows, along with the need for different programs and resources at each step, necessitates the use of “workflow managers.” These tools orchestrate the processes, dependencies, deployment, and tracking of large bioinformatics pipelines.

Individuals with data engineering experience at tech companies are usually surprised when they hear about the ecosystem of bioinformatics workflow managers: the set of tools is almost completely disjoint from the big data workflow tools they’re used to. Why, then, should scientists use a bioinformatics-specific workflow manager? I have found three reasons for this separation:

- Differences in data type, shape and scale

- Differences in programs and tooling

- Community support behind bioinformatics workflow managers

First, which tools are used in bioinformatics and data engineering?

There are several popular bioinformatics workflow managers. A non-exhaustive list includes Nextflow, Snakemake, the Common Workflow Language (CWL), and the Workflow Description Language (WDL). These workflow managers all provide the necessary capabilities of data provenance, portability, scalability, and re-entrancy (the ability to resume a failed or interrupted run without redoing completed steps). For a more thorough review, see (Wratten et al. 2021).
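To make re-entrancy concrete, here is a toy sketch in Python of the make-style rule behind it: skip a step when all of its output files exist and are at least as new as its inputs. The helpers `is_up_to_date` and `run_step`, and the file names, are hypothetical illustrations, not any tool's API. Real workflow managers are more sophisticated; Nextflow’s `-resume`, for example, keys its cache on hashes of each task’s inputs and script rather than on file timestamps.

```python
import os
import tempfile
from pathlib import Path
from typing import Callable, List, Sequence


def is_up_to_date(inputs: Sequence[Path], outputs: Sequence[Path]) -> bool:
    """True if every output exists and none is older than the newest input."""
    if not all(p.exists() for p in outputs):
        return False
    newest_input = max((p.stat().st_mtime for p in inputs), default=0.0)
    oldest_output = min(p.stat().st_mtime for p in outputs)
    return oldest_output >= newest_input


def run_step(name: str, inputs: Sequence[Path], outputs: Sequence[Path],
             action: Callable[[], None], log: List[str]) -> None:
    """Run `action` only if its outputs are missing or stale (hypothetical helper)."""
    if is_up_to_date(inputs, outputs):
        log.append(f"skip {name}")
    else:
        action()
        log.append(f"run {name}")


def demo() -> List[str]:
    """Run a hypothetical trimming step three times and record what happened."""
    log: List[str] = []
    with tempfile.TemporaryDirectory() as tmp:
        raw = Path(tmp) / "sample.fastq"
        trimmed = Path(tmp) / "sample.trimmed.fastq"
        raw.write_text("@read1\nACGT\n+\nIIII\n")

        def trim() -> None:
            # Stand-in for a real command-line trimming tool.
            trimmed.write_text(raw.read_text())

        run_step("trim", [raw], [trimmed], trim, log)  # output missing: runs
        run_step("trim", [raw], [trimmed], trim, log)  # up to date: skipped
        newer = trimmed.stat().st_mtime + 10
        os.utime(raw, (newer, newer))                  # simulate an edited input
        run_step("trim", [raw], [trimmed], trim, log)  # stale: runs again
    return log


if __name__ == "__main__":
    print(demo())
```

The second call is skipped because its output is already current; touching the input makes the output stale, so the third call reruns the step.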

In data engineering, several graph-based workflow managers are used to run tasks based on a schedule or dependencies. These include Airflow, Flyte, Dagster and Prefect. These tools are simple to wire up to databases and other cloud compute services, and easily scale to manage millions of events.

Differences in data type, shape, and scale

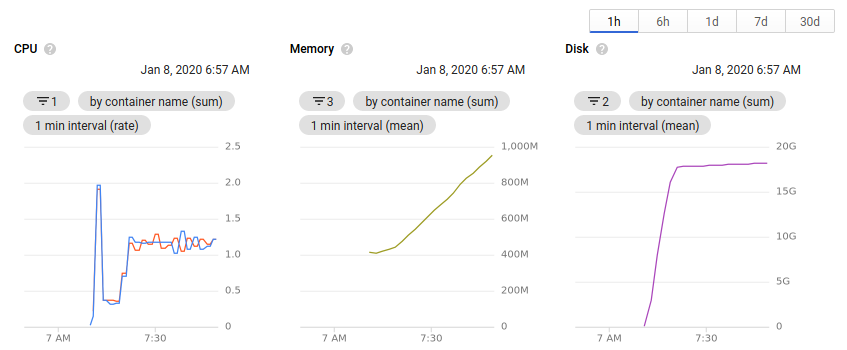

In bioinformatics and data engineering, the type, shape, and size of data differ. Most genomic data is stored in large compressed text files, often reaching several gigabytes per sample. The total number of samples is often limited by practical constraints such as sequencing cost. Individual steps in a bioinformatics pipeline commonly take files as inputs and produce files as outputs, and each step can have different compute, memory, and disk space requirements. Databases are rarely used to store results.

In contrast, data engineering workflows may involve processing millions of events from an application, transforming user-uploaded images, or ingesting logs from across an application stack. Data is more likely to be stored in databases, individual processing steps may be simpler and better suited to serverless architectures, and the total numbers of inputs and outputs may be much higher.

In short, a bioinformatics workflow may process data from 1,000 samples, where each input is a 4 GB compressed text file. A data engineering workflow may process 20 million images, each 200 kB in size. The total amount of data flowing through each pipeline is the same (about 4 TB), but the needs of the two use cases can be drastically different.
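The arithmetic behind this comparison is easy to check. The sketch below assumes decimal (SI) units, so 1 GB is 10^9 bytes and 1 kB is 10^3 bytes; the variable names are only for illustration.

```python
# Decimal (SI) units: 1 GB = 10**9 bytes, 1 kB = 10**3 bytes, 1 TB = 10**12 bytes.
GB = 10**9
KB = 10**3
TB = 10**12

bioinfo_files, bioinfo_file_size = 1_000, 4 * GB          # 1,000 samples of 4 GB
imaging_files, imaging_file_size = 20_000_000, 200 * KB   # 20 million images of 200 kB

bioinfo_total = bioinfo_files * bioinfo_file_size
imaging_total = imaging_files * imaging_file_size

print(bioinfo_total / TB, imaging_total / TB)   # total volume in TB for each pipeline
print(bioinfo_file_size // imaging_file_size)   # how many times larger each genomic file is
```

Both totals come to 4.0 TB, yet each genomic file is 20,000 times larger than each image and there are 20,000 times fewer of them, which is exactly the difference in shape that drives tool choice.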

| | Bioinformatics | Data Engineering |
|---|---|---|
| Size of data files | Large | Small |
| Data type | Compressed text, proprietary formats | Common formats (text, images, etc.) |
| Number of data files | Small | Large |
| Compute intensity per step | Medium to large | Small to medium |
| Results stored in databases? | Rarely | Often |

Differences in programs and tooling

Bioinformatics pipelines are often built by stringing together many command line tools. These tools may have different installation methods and incompatible dependencies. Bioinformatics workflow managers solve these problems by allowing for a separate environment definition or container in each step. Finally, analysis steps may be written in different scripting languages, such as Python, R, or MATLAB, all of which need to be accessible to the workflow manager.
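As a rough illustration of per-step isolation, the Python sketch below gives each step its own container image and builds (but does not execute) a `docker run` command that mounts a shared working directory. The image names, commands, and the `Step` and `docker_argv` helpers are hypothetical. In practice the workflow manager does this for you declaratively, for instance through Nextflow’s `container` process directive or Snakemake’s `container:` and `conda:` rule directives.

```python
import shlex
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Step:
    name: str
    image: str          # hypothetical image holding this step's tool and dependencies
    command: List[str]  # command run inside that container


def docker_argv(step: Step, workdir: str) -> List[str]:
    """Build a `docker run` command that mounts the shared work directory at /work."""
    return ["docker", "run", "--rm",
            "-v", f"{workdir}:/work", "-w", "/work",
            step.image, *step.command]


# Two hypothetical steps whose tools could have conflicting dependencies.
steps = [
    Step("qc", "example.org/qc-tool:1.0", ["qc-tool", "sample.fastq"]),
    Step("summarize", "example.org/r-env:4.2", ["Rscript", "summarize.R"]),
]

for s in steps:
    print(shlex.join(docker_argv(s, "/data/run1")))
```

Because each step names its own image, a Python tool and an R script never have to share one environment; only the files in the mounted directory pass between them.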

In contrast, data engineering workflows are primarily written in a single language, which is used to define both the workflow structure and the data processing steps. For example, Dagster is written in Python and only has weak extension support for other languages.

Community support of bioinformatics-specific workflow managers

Another advantage of using a bioinformatics-specific workflow manager is the strong community built around each of these tools. The nf-core community around Nextflow is the most active, but similar groups exist for Snakemake and CWL. In nf-core, you can join thousands of scientists working on similar problems, use pipelines developed and maintained by the community, and ask for help on GitHub or Slack. Even if the community-developed pipelines don’t solve your problem exactly, they can be a great starting point for further customization. Science is all about standing on the shoulders of giants, so why re-implement a pipeline in Airflow when it already exists in nf-core?

An example bioinformatics workflow

The nf-core RNA-Seq workflow (nf-core/rnaseq) is a community-developed pipeline for conducting all the steps in an RNA-Seq analysis. Starting with raw sequencing reads in the FASTQ file format, the data goes through QC, alignment to the reference genome, quantification, and differential expression calculation. This pipeline has been developed over many years and has 3700+ commits on GitHub. The default workflow uses several different programs and has 20 steps; adopting it will almost certainly get you results faster than writing everything from scratch.
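Under the hood, a workflow manager treats a pipeline like this as a dependency graph and runs each step once everything it depends on has finished. The Python sketch below uses the standard library’s `graphlib.TopologicalSorter` (Python 3.9+) to group a simplified, hypothetical set of RNA-Seq steps into waves that could run in parallel; the step names are illustrative and are not nf-core/rnaseq’s actual process names.

```python
from graphlib import TopologicalSorter  # standard library, Python 3.9+
from typing import Dict, List, Set

# Hypothetical, simplified RNA-Seq steps mapped to the steps they depend on.
RNASEQ_DEPS: Dict[str, Set[str]] = {
    "qc": set(),
    "index_reference": set(),
    "align": {"index_reference"},
    "quantify": {"align"},
    "differential_expression": {"quantify"},
    "report": {"qc", "differential_expression"},
}


def schedule_waves(deps: Dict[str, Set[str]]) -> List[List[str]]:
    """Group steps into waves; every step in a wave has all its dependencies done."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()  # raises graphlib.CycleError if the graph has a cycle
    waves: List[List[str]] = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())
        waves.append(ready)
        sorter.done(*ready)  # in a real run, mark done only after the step succeeds
    return waves


for i, wave in enumerate(schedule_waves(RNASEQ_DEPS)):
    print(f"wave {i}: {wave}")
```

Running it prints five waves, starting with `qc` and `index_reference` side by side and ending with `report`. A real scheduler would start each step as soon as its own inputs are ready rather than in lockstep waves, but the ordering constraint is the same.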

What about scale?

Nextflow workflows should scale to millions of samples, as long as sufficient compute resources are available; for example, 23andMe uses Nextflow to process genetic data from customers. However, bioinformatics workflow managers may not be the best choice when biological data shifts into the shape and scale typically handled by data engineering workflows. I’m thinking most concretely about Ginkgo Bioworks, which processes terabytes of sequencing data through its pipeline each day. The individual files processed are much smaller, so jobs may take seconds to run instead of hours. Ginkgo eventually settled on a workflow composed of Airflow, Celery, and AWS Batch. Efficiency is paramount at this scale, and a whole data engineering team contributed to Ginkgo’s solution. Most biotech companies and academic labs are better off using Nextflow or another bioinformatics-specific workflow manager, which can be deployed by a single scientist.
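A back-of-the-envelope calculation shows why job duration matters so much here. Suppose every task pays a fixed overhead for scheduling, container start-up, and staging files in and out. The 30-second figure below is a hypothetical placeholder, not a measurement of Nextflow, Airflow, or any other tool.

```python
def overhead_fraction(task_seconds: float, overhead_seconds: float) -> float:
    """Share of wall time spent on per-task overhead rather than useful work."""
    return overhead_seconds / (task_seconds + overhead_seconds)


# Hypothetical fixed cost per task: scheduling, container start-up, file staging.
OVERHEAD_SECONDS = 30.0

for label, seconds in [("2-hour job", 2 * 3600), ("5-second job", 5)]:
    share = overhead_fraction(seconds, OVERHEAD_SECONDS)
    print(f"{label}: {share:.1%} of wall time is overhead")
```

With these assumed numbers, overhead is about 0.4% of a two-hour job but about 86% of a five-second job, which is why per-task efficiency starts to dominate once a pipeline runs huge numbers of short jobs.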

Where is the field headed?

Having worked in bioinformatics for 10 years now, I have a few ideas about where the field is headed. I’m open to being wrong on any of these predictions, so let me know in the comments!

- Bioinformatics-specific workflow managers will stick around for the foreseeable future. The most powerful argument for this is the activity and excitement in communities like nf-core.

- Nextflow is the best choice for doing bioinformatics at scale in 2022.

- Cloud is the future, but it’s still challenging to manage a team doing bioinformatics in the cloud.

  - A large part of this is that scientists are trained to work on local computers or university-built HPC clusters. The tools to abstract away the complexity of cloud computing for scientists do not exist yet.

- A more advanced, easier-to-use workflow manager will be developed that overtakes Nextflow in popularity and community support.

  - It will be written in Python, not a clunky DSL or an obscure language like Groovy.

  - It will natively support execution in multiple cloud environments, intelligent resource usage, and smooth logging and debugging.

  - It will have an optional graphical interface for pipeline design and monitoring.

  - It may already have been started: Redun satisfies many of these criteria.

Conclusion

Computational biologists and bioinformaticians often use domain-specific workflow managers like Snakemake, Nextflow, and CWL. To someone with a data engineering background, this may be confusing, as well-developed and efficient workflow orchestration tools already exist. Digging deeper, the differences in data type and scale, tooling, and bioinformatics-specific communities reveal strong reasons for choosing a bioinformatics-specific workflow manager, even at large scale.

References

- Wratten, L., Wilm, A. & Göke, J. Reproducible, scalable, and shareable analysis pipelines with bioinformatics workflow managers. Nat Methods 1–8 (2021) doi:10.1038/s41592-021-01254-9.